The Little Engine that Will

Not too many have heard of NVIDIA, but it will power most of our technology for years to come

Technology can be hard to use sometimes. People devote their entire lives to making sure that using a computer, application, or website is a good experience. But UX design has only taken off in the last decade; early websites looked a lot like Craigslist. More often than not, the companies that succeed among today’s competition have the most intuitive design and best user experience.

(Source: NVIDIA)

What’s even harder than making technology work, especially as it starts to cross new frontiers like virtual reality or AI, is making the computers and servers behind that technology work. A few months ago I wrote about semiconductors and how they are the little engine that makes our computers go. Laptops, cars, cell phones, and more all rely on semis to run. Without them, we can’t do many of the things that we’d actually like to do with electronic devices. You’ve likely heard of the recent supply chain issues and their impact on the production of gaming devices, cars, etc. Semis are a limiting factor in the growth of our technology as we know it.

But focusing on current issues is less exciting than talking about future potential. Semis are going to be critical to the development of AI, crypto, virtual/augmented reality, robotics, and so much more. Chips right now can run Artificial Neural Network (ANN) applications, for example, but it takes a specially designed chip to reach peak performance. These specially designed chips are among the most difficult items in the world to design, if not the most difficult. To borrow a word from the Acquired podcast, albeit about a different company in semis, designing and producing semiconductor chips is literally “alchemy”.

Semiconductor manufacturing is one of the most advanced processes in human history, one that literally gets down to moving electrons with lasers. It depends entirely on math, engineering, and science all being applied to already extremely complex manufacturing. It’s also extremely capital intensive. Machines that make semiconductors cost millions of dollars and have to be transported using more than five jets. The rooms that chips are manufactured in are thousands of times cleaner than hospitals.

By that logic, the company that can create the most advanced semiconductors for the widest array of emerging tech use cases should be poised for success, right? Right. Enter NVIDIA, the semi industry’s future-tech stalwart.

(Source: NVIDIA)

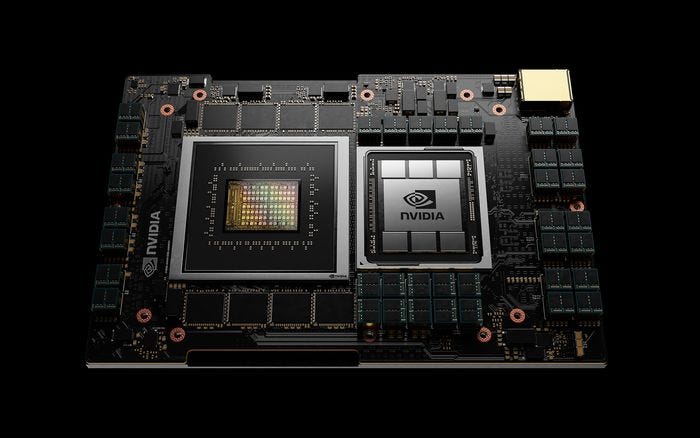

In 1999, NVIDIA popularized what we know today as the GPU. GPUs are the specialized circuits that alter computer memory quickly to create images in real time in video games. The reason we no longer play Pac-Man or Mario 64 is the advent of the GPU. It changed the game (nice). As NVIDIA puts it, “GPUs are the souls of the PC. But over the last decade, they’ve broken out of the boxy confines of the PC”. GPUs work by breaking complex problems into millions of separate tasks and working them all out at once. That’s ideal when changing the graphics and pixels of a screen. Or deep faking a portion of your own keynote speech.
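To make the “millions of separate tasks” idea concrete, here is a minimal Python sketch, not NVIDIA’s implementation. Brightening an image is a job where every pixel can be handled independently, so the work can be split into chunks and handed to separate workers. The function names and the tiny eight-pixel image are made up for illustration, and Python threads only stand in for the thousands of GPU cores that would do this in hardware.

```python
from concurrent.futures import ThreadPoolExecutor

def brighten_pixel(value, amount=40):
    """Brighten one 0-255 grayscale pixel; it depends on no other pixel."""
    return min(255, value + amount)

def brighten_sequential(pixels):
    """A single worker visits every pixel in order."""
    return [brighten_pixel(p) for p in pixels]

def brighten_parallel(pixels, workers=4):
    """Split the image into chunks and process the chunks concurrently."""
    chunk = max(1, len(pixels) // workers)
    chunks = [pixels[i:i + chunk] for i in range(0, len(pixels), chunk)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # map() returns results in the same order as the input chunks
        results = list(pool.map(brighten_sequential, chunks))
    return [p for part in results for p in part]

image = [0, 30, 100, 200, 230, 255, 10, 90]  # hypothetical 8-pixel image
print(brighten_parallel(image))
```

Because no pixel needs to know about its neighbors, the parallel version returns the same image as the sequential one. That independence is what lets the work spread across many cores.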

It’s also ideal when considering additional technologies outside gaming on PCs, PlayStations, etc. Consider how AI/ML works. A model is trained on thousands to billions of data points so that a software program can run using information that exists and then adapt. GPUs are the key to that neural network, specifically through what’s called “deep learning”. Deep learning lets neural networks perform tasks that are far too complicated for engineers to program by hand. NVIDIA has pushed deep learning forward through the addition of Tensor Cores in its GPUs. Tensor Cores further accelerate the GPU’s matrix operations, or the many “cells” working in parallel, like the green in the image above. NVIDIA recently pushed an update that lets people use their own voice to train devices like Alexa on human speech qualities, such as rhythm and intonation.
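The matrix operations mentioned above boil down to a multiply-accumulate, D = A × B + C, applied to small tiles of numbers. The sketch below writes that operation out in plain Python to show that every output cell can be computed on its own, which is what makes it a good fit for parallel hardware. It illustrates the math only: the function name `matmul_accumulate` and the 2×2 values are assumptions for the example, and real Tensor Cores perform this in silicon on fixed-size tiles.

```python
def matmul_accumulate(a, b, c):
    """Return D = A x B + C for matrices given as lists of rows."""
    rows, inner, cols = len(a), len(b), len(b[0])
    d = [[0] * cols for _ in range(rows)]
    for i in range(rows):
        for j in range(cols):
            # Cell (i, j) needs only row i of A, column j of B, and C[i][j],
            # so every cell could be computed at the same time.
            d[i][j] = sum(a[i][k] * b[k][j] for k in range(inner)) + c[i][j]
    return d

# Hypothetical 2x2 inputs, chosen only to keep the arithmetic easy to check.
a = [[1, 2], [3, 4]]
b = [[5, 6], [7, 8]]
c = [[1, 1], [1, 1]]
result = matmul_accumulate(a, b, c)
print(result)
```

Training a neural network repeats this kind of operation an enormous number of times, which is why dedicated hardware for it pays off.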

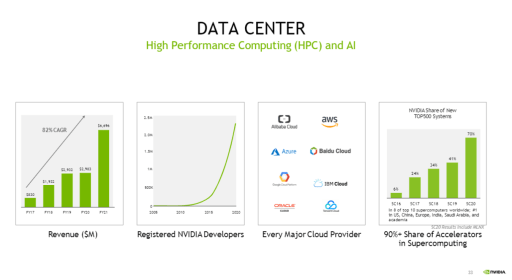

(Source: NVIDIA Investor Presentation)

Similarly, NVIDIA’s GPUs are used in cars to create imagery in dashboards and computers. But they’re also critical to the development of self-driving cars (through the AV DRIVE chip) thanks to their acceleration of the cars’ learning. The same can be said for robotics, where machines need to perceive their environment, and for healthcare, where millions of data points can be analyzed for just a single person through the Clara chip and others. Their chips, such as the A100 (8th Gen series), can be deployed for existing use cases as well. The A100 was designed specifically for data centers. Its specialties are high performance computing (HPC) and inference processes. NVIDIA is used by eight of the top ten supercomputers in the world, and over 300 of the top 500. What makes NVIDIA special is that it has dozens of chips that are applicable to niche purposes.

(Source: NVIDIA Investor Presentation)

The addition of their data center chips has made up “the lion’s share” of their $500MM revenue increase last quarter, according to Colette Kress, NVIDIA’s CFO. Another interesting bolster to NVIDIA’s top line has been their Cryptocurrency Mining Processors (CMPs), which were cleverly developed to sit alongside gaming GPUs and minimize revenue cannibalization. Given the huge growth of interest in cryptocurrencies, NFTs, and more over the last year, CMPs did just fine. NVIDIA rode the crypto tailwinds to $266MM in CMP revenue. Given NVIDIA’s varied business drivers, it’s easy to see how entrenched their growing moat really is.

(Source: NVIDIA Investor Presentation)

One of NVIDIA’s biggest differentiators is CUDA, its proprietary toolkit used to develop, optimize, and deploy applications across almost any technology that uses NVIDIA GPUs. Similarly, NVIDIA has a software development kit (SDK) named Merlin that helps developers build AI-based recommendation systems. Merlin can reportedly “reduce the time it takes to create a recommender system from a 100-terabyte dataset (about 1,563 64 GB devices filled completely with data) to 20 minutes from four days.”
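For a sense of scale, the figures in that claim can be checked with some quick arithmetic. This is a back-of-the-envelope sketch: it assumes decimal units (1 TB = 1,000 GB) and treats “four days” as exactly 96 hours, and the variable names are just for illustration.

```python
import math

DATASET_GB = 100 * 1000   # 100 terabytes, assuming 1 TB = 1,000 GB
UNIT_GB = 64              # one completely full 64 GB unit of storage

# Round up, since a partly filled unit still counts as one unit.
units_needed = math.ceil(DATASET_GB / UNIT_GB)

before_minutes = 4 * 24 * 60   # four days, expressed in minutes
after_minutes = 20
speedup = before_minutes / after_minutes

print(units_needed)   # 1563
print(speedup)        # 288.0
```

So the quoted improvement works out to roughly a 288-fold reduction in turnaround time.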

While NVIDIA has put significant effort into building things internally, they haven’t ruled out buying. One of the biggest tech sagas of the year has been NVIDIA’s stalled effort to acquire ARM, another semiconductor and software company. ARM designs CPUs and other chips, but is primarily known for being the market-leading processor designer for mobile phones, tablets, and TVs. Criticism has surfaced because of ARM’s open business model, which lets other companies license its chip designs for their own needs. With ARM’s chips deployed at “the edge”, meaning individual devices, NVIDIA has the chance to own both the edge and the data centers that power it. Edge computing is the second piece of what’s called inference. Inference is using a trained model to interpret new data, such as images (as in augmented reality or autonomous vehicles). Most of the training behind those models happens at data centers, but some happens at the edge as well. As discussed above, NVIDIA would own the chips up and downstream. Owning a significant portion of the chips powering AI. That’s a moat.

There is a nonzero chance that the deal doesn’t go through. Acquiring ARM would make NVIDIA a monster. One with significant control of the AI chip market. But even should that fail, NVIDIA is increasingly well positioned to dominate the AI chip market for years to come, among others. NVIDIA’s GPUs will continue to lead gaming and crypto mining for years to come as well. Sustained profits, diversified and growing revenue streams, strong free cash flow, and the potential of the ARM acquisition put NVIDIA ahead of the pack and leave it deeply entrenched as a market leader.

*Disclaimer: I own shares of NVIDIA and have a long position.